This project aims to colorize One Piece manga pages using GANs. I plan to experiment more with CycleGAN and ACL-GAN implementations, since the supervised pix2pix GAN requires too much time spent matching the raw and colored manga pages together. My current implementation uses the colored pages and grayscale versions of those same pages as the training pairs, which of course does not translate perfectly to the raw manga pages. Just removing miscellaneous images (indexes, author's notes, covers, etc.) from the colored pages dataset took upwards of 2 hours. Because the order and inclusion of these miscellaneous images are not consistent between the raw and colored pages, pruning both datasets for supervised training is unrealistic (more accurately, I would rather just implement an unsupervised model because I'm too lazy). For copyright reasons, I will not include the datasets or the scripts I used to scrape them in this repo.
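As a rough illustration of the grayscale training pairs described above, here is a minimal sketch of how one colored page could be turned into an (input, target) pair. This is not the code used in this repo: the function name `make_training_pair`, the BT.601 luma weights, and the scaling to `[-1, 1]` (a common choice when the generator ends in a tanh) are all assumptions made for the example.

```python
from typing import Tuple

import numpy as np

# Assumption: BT.601 luma weights, the same ones Pillow uses for convert("L").
LUMA_WEIGHTS = np.array([0.299, 0.587, 0.114], dtype=np.float32)


def make_training_pair(color_page: np.ndarray) -> Tuple[np.ndarray, np.ndarray]:
    """Build a (grayscale_input, color_target) pair from one colored page.

    color_page: uint8 array of shape (H, W, 3) in RGB order.
    Returns float32 arrays of shape (H, W, 1) and (H, W, 3), scaled to [-1, 1].
    """
    # Scale 0..255 to -1..1 (assumed normalization, not confirmed by the repo).
    rgb = color_page.astype(np.float32) / 127.5 - 1.0
    # Weighted sum over the channel axis gives one luminance value per pixel.
    gray = rgb @ LUMA_WEIGHTS
    # Keep an explicit channel axis so the input is (H, W, 1).
    return gray[..., np.newaxis], rgb
```

Because the grayscale input is derived from the colored page, it keeps the colorist's shading, which the raw pages do not have.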

Because the series is so long, the art style and environments change drastically over time. Here I will showcase some examples. There may be some mild spoilers (nothing too important though), so if you care at all, turn away now.

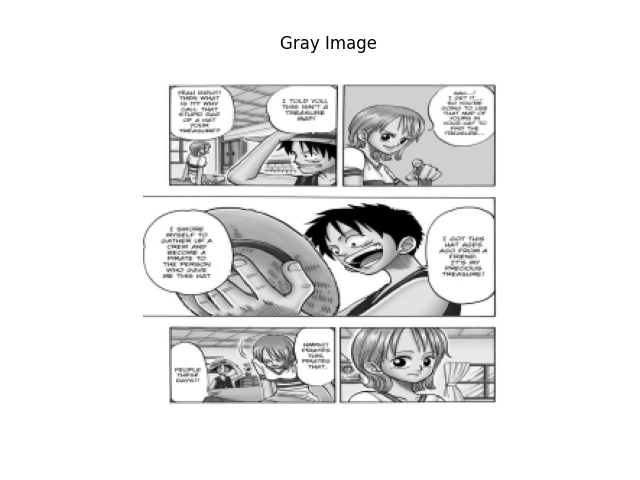

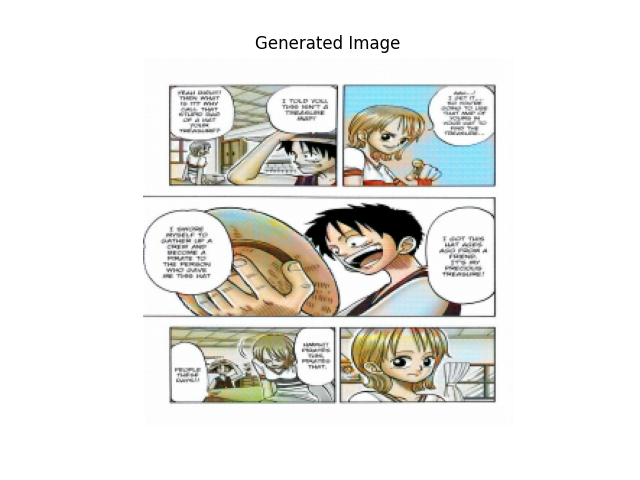

This page is pretty early in the series (chapter 9). It is relatively simple, and the results are pretty good: the generator gets most of the colors correct, apart from some strange streaks of discoloration. The colors overall look a bit washed out, however. Perhaps a larger lambda value would fix it?

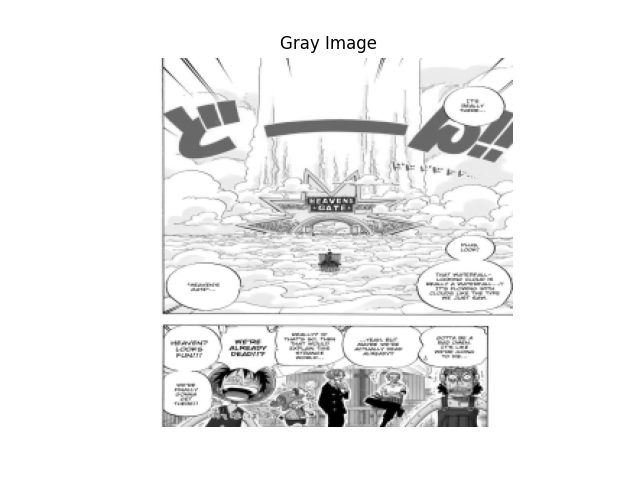

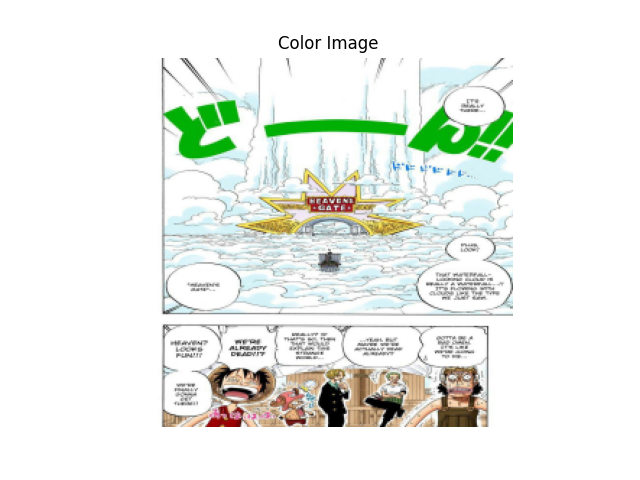

Here, the gang is on a sky island (chapter 238, much further into the series). The generator gets the color of the clouds correct, which is a very pleasant surprise. Like the previous example, the generated output colors are slightly grayer than they should be.

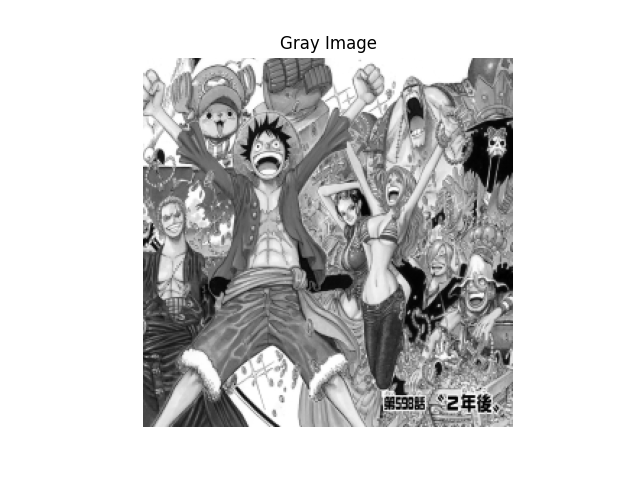

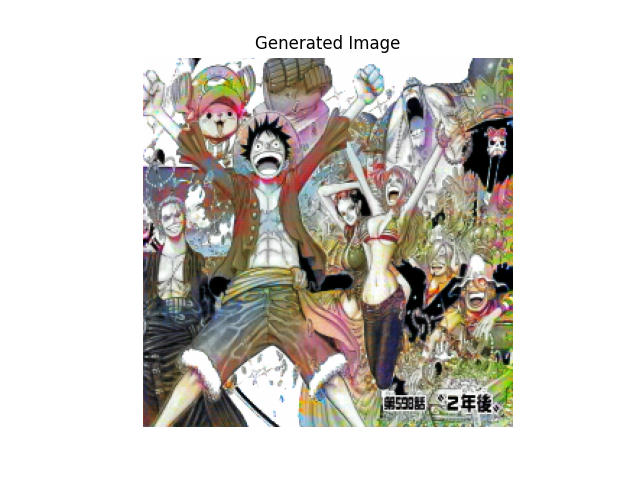

This image is the chapter cover for chapter 598. Chapter covers with this much detail are rare (maybe around 30ish in total?), so it's unsurprising that the model struggled with this one. The colors are mostly correct, but, like the last two, are slightly washed out.

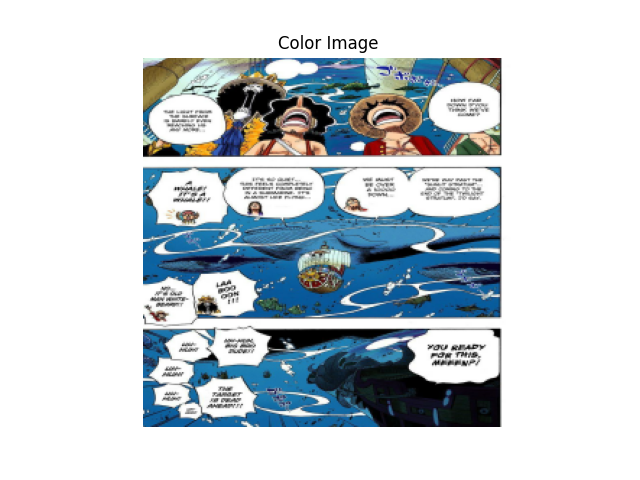

All of a sudden, in chapter 604, the gang is underwater! As expected, the generator struggled with this one. I have no clue what it thinks the water is, but it looks red for some reason.

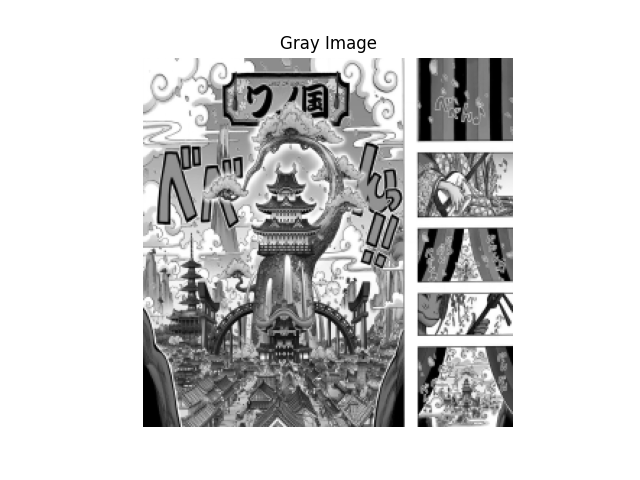

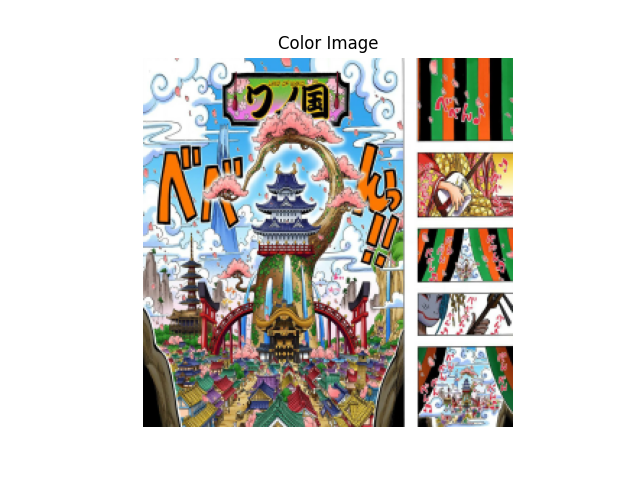

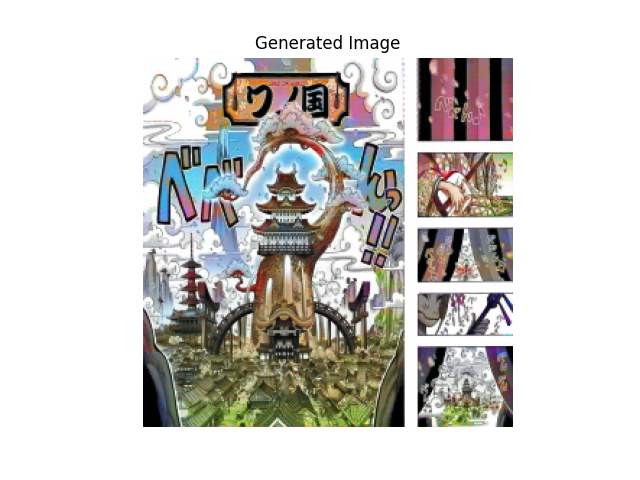

Just for fun, I wanted to see what the generated image would look like for one of the most intricate pages in the entire series, all the way at chapter 909. The generated image is not nearly as colorful as the colored version, but it still looks pretty cool!

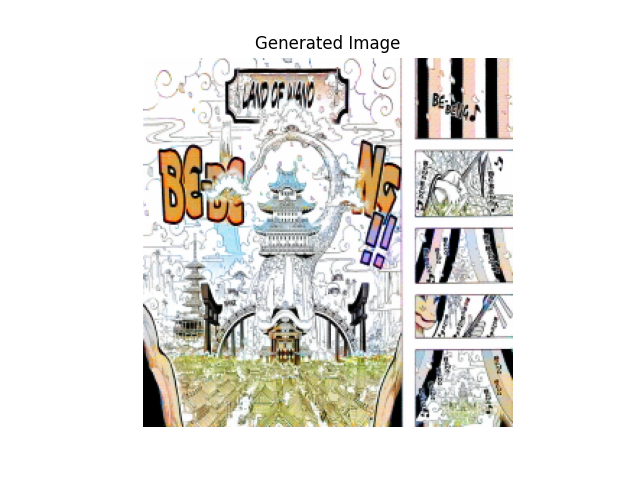

As I mentioned before, the supervised method does not translate well to the original pages at all. Here's what the generated output looks like using the raw page.

I surmise that the lack of shading is confusing the generator, as it relied on the implicit information given by the grayscale. Perhaps that's why the sky island example looked so good.

My next goal is to try training the model on more specific subsets of the manga. For my examples, I trained the model using all 900+ chapters that I scraped. The evolution of the style and the changing environments may be influencing the model in unexpected ways. I may also experiment with the lambda value; the value given by the original paper may not be the best suited for this dataset.
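For reference, the lambda mentioned above is the weight on the L1 term in the pix2pix objective. As I read the paper, the objective has the form below, where x is the input (here, the grayscale page), y is the target colored page, and z is the noise input:

```latex
G^{*} = \arg\min_{G}\max_{D}\; \mathcal{L}_{cGAN}(G, D) + \lambda\,\mathcal{L}_{L1}(G),
\qquad
\mathcal{L}_{L1}(G) = \mathbb{E}_{x,y,z}\big[\lVert y - G(x, z)\rVert_{1}\big]
```

The paper uses lambda = 100 in its experiments. It also notes that the L1 term tends toward averaged, grayish colors when the correct color is uncertain, so it may be worth trying smaller values as well as larger ones. Whether either direction fixes the washed-out colors on this dataset is untested.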

After that, I will try CycleGAN and ACL-GAN for raw page to color page conversion.

All the theoretical details relating to pix2pix GAN can be found in this paper: https://arxiv.org/abs/1611.07004