This project involves building a Convolutional Neural Network (CNN) to classify images of Pakistani celebrities. The dataset was collected using the Chrome Extension "Download All Images" and underwent several preprocessing steps before model training.

- OpenCV (`cv2`)

- NumPy

- Matplotlib

- os

- shutil

- TensorFlow

- Keras

- imghdr

All necessary libraries are imported at the beginning of the script for clarity and reproducibility.

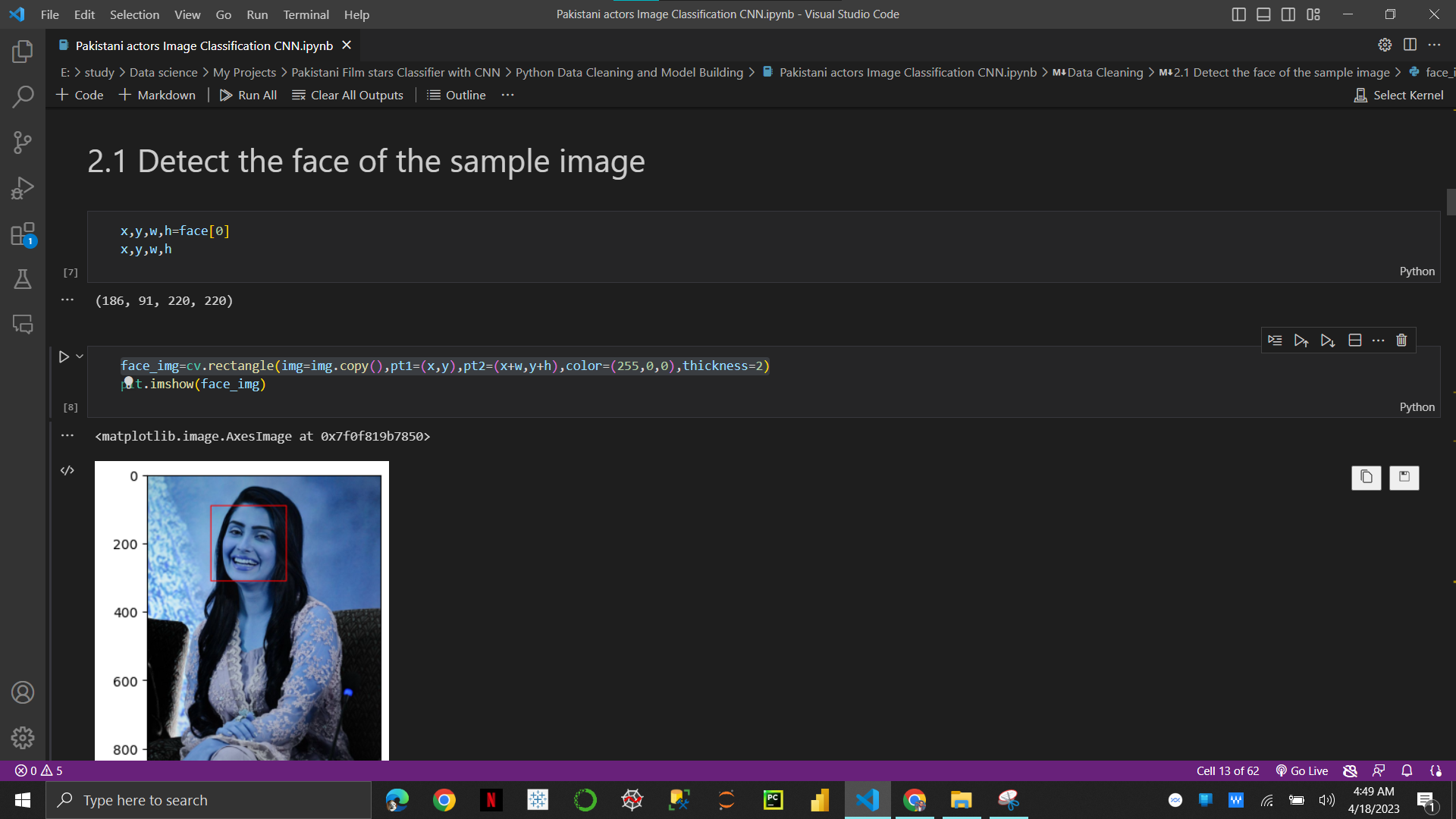

Data cleaning is essential for preparing the dataset for training. This step involves:

- Utilizing pre-trained Haar Cascade XML files to detect and crop faces in images.

- Deleting images where the cropped face does not belong to the intended celebrity.

- Removing blurry or low-quality images that may hinder model performance.
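The automated parts of this cleaning step can be sketched roughly as follows. This is a minimal illustration, not the project's actual script: the function names, the `haarcascade_frontalface_default.xml` cascade, the detection parameters, and the blur threshold of 100 are all assumptions. Checking that a cropped face belongs to the intended celebrity is still a manual review step and is not covered here.

```python
def largest_box(boxes):
    """Return the (x, y, w, h) box with the largest area, or None if there are none."""
    boxes = [tuple(int(v) for v in b) for b in boxes]
    return max(boxes, key=lambda b: b[2] * b[3]) if boxes else None


def crop_face(image_path, blur_threshold=100.0):
    """Return the cropped face (BGR array), or None if the image should be discarded.

    The threshold is a hypothetical starting value and needs tuning per dataset.
    """
    import cv2  # imported lazily so largest_box() works without OpenCV installed

    # cv2.data.haarcascades is the folder of cascade XML files shipped with opencv-python.
    cascade = cv2.CascadeClassifier(
        cv2.data.haarcascades + "haarcascade_frontalface_default.xml"
    )
    image = cv2.imread(str(image_path))
    if image is None:  # unreadable or unsupported file
        return None

    gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)
    box = largest_box(cascade.detectMultiScale(gray, scaleFactor=1.3, minNeighbors=5))
    if box is None:  # no face detected
        return None

    x, y, w, h = box
    face = image[y:y + h, x:x + w]

    # Variance of the Laplacian is a common sharpness heuristic: low values suggest blur.
    face_gray = cv2.cvtColor(face, cv2.COLOR_BGR2GRAY)
    sharpness = cv2.Laplacian(face_gray, cv2.CV_64F).var()
    return face if sharpness >= blur_threshold else None
```

Keeping only the largest detection is a design choice for single-subject photos; group shots would need a different rule.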

A preprocessing function is applied to handle images with incorrect formats and ensure uniformity in the dataset.
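One way such a format check could look is shown below. The library list above includes `imghdr`, but that module was removed from the standard library in Python 3.13, so this sketch reads the file signature directly instead. The function names, the set of accepted formats, and the choice to delete offending files are assumptions for illustration.

```python
from pathlib import Path

# Leading bytes ("magic numbers") of a few common image formats.
SIGNATURES = {
    b"\xff\xd8\xff": "jpeg",
    b"\x89PNG\r\n\x1a\n": "png",
    b"BM": "bmp",
}


def sniff_image_type(path):
    """Return 'jpeg', 'png', or 'bmp' based on the file header, else None."""
    with open(path, "rb") as fh:
        header = fh.read(16)
    for magic, kind in SIGNATURES.items():
        if header.startswith(magic):
            return kind
    return None


def remove_invalid_images(data_dir, allowed=("jpeg", "png", "bmp")):
    """Delete files under data_dir whose content is not an allowed image type.

    Returns the names of the removed files.
    """
    removed = []
    for path in sorted(Path(data_dir).rglob("*")):
        if path.is_file() and sniff_image_type(path) not in allowed:
            path.unlink()
            removed.append(path.name)
    return removed
```

Checking content rather than the file extension catches downloads that were saved with a misleading suffix, such as an HTML error page named `photo.jpg`.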

The dataset is processed into batches using TensorFlow's Keras utilities to streamline model training.

Images are scaled to a consistent size to facilitate model training, as CNNs typically require inputs of uniform dimensions.

The dataset is divided into training, testing, and validation sets with proportions of 70%, 20%, and 10%, respectively.
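The batching, resizing, and splitting steps might be combined along these lines. This is a sketch under stated assumptions: the 256×256 image size, batch size of 32, pixel rescaling to [0, 1], the function names, and the one-subfolder-per-celebrity layout are illustrative and not taken from the project.

```python
def split_sizes(n_batches, train_pct=70, test_pct=20):
    """Return (train, test, val) batch counts; validation takes the remainder."""
    train = n_batches * train_pct // 100
    test = n_batches * test_pct // 100
    return train, test, n_batches - train - test


def load_and_split(data_dir, image_size=(256, 256), batch_size=32):
    """Load images from data_dir (one subfolder per class) and split 70/20/10."""
    import tensorflow as tf  # imported lazily so split_sizes() works without TensorFlow

    # Resizes every image to image_size and groups them into batches.
    # Labels are integers by default, which suits sparse categorical cross-entropy.
    ds = tf.keras.utils.image_dataset_from_directory(
        data_dir, image_size=image_size, batch_size=batch_size
    )
    # Rescale pixel values from [0, 255] to [0, 1] (an assumed, common choice).
    ds = ds.map(lambda x, y: (x / 255.0, y))

    n_train, n_test, _ = split_sizes(len(ds))
    train = ds.take(n_train)
    test = ds.skip(n_train).take(n_test)
    val = ds.skip(n_train + n_test)
    # Caveat: the loader shuffles by default, so element order may change between
    # iterations and take/skip splits may not stay strictly disjoint across epochs.
    # A stricter pipeline would split the file paths before building the datasets.
    return train, test, val
```

For example, `split_sizes(10)` gives 7 training, 2 testing, and 1 validation batch.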

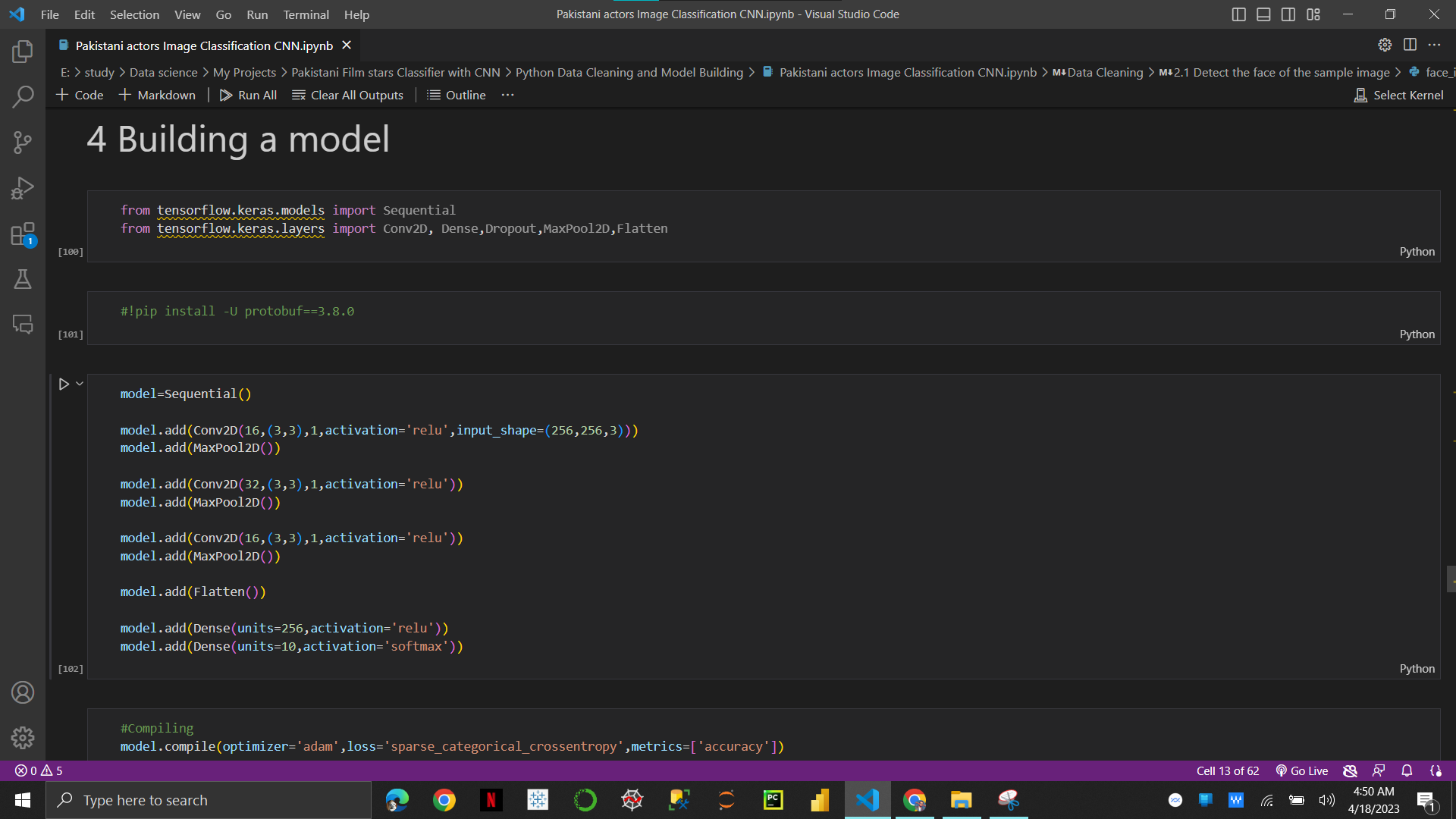

The CNN architecture consists of:

- Six convolutional (`Conv2D`) layers followed by max-pooling layers for feature extraction.

- A flattening layer to transform the data into a 1D array.

- Two dense (`Dense`) layers for classification, with the output layer using the softmax activation function for multi-class classification.

The model is compiled with sparse categorical cross-entropy loss and the Adam optimizer.
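A model matching this description could be assembled roughly as below. The filter counts, the 3×3 kernels, the 256-unit hidden layer, the 256×256 input size, and the pairing of every convolution with its own pooling layer are assumptions; only the layer types, the softmax output, the loss, and the optimizer come from the description above.

```python
def spatial_size_after(size, n_blocks=6, kernel=3, pool=2):
    """Side length left after n_blocks of (unpadded conv -> max-pool)."""
    for _ in range(n_blocks):
        size = (size - kernel + 1) // pool
    return size


def build_model(num_classes, image_size=(256, 256),
                filters=(16, 32, 64, 64, 128, 128)):
    """Six Conv2D + MaxPooling2D blocks, Flatten, then two Dense layers."""
    from tensorflow.keras import layers, models  # lazy import

    model = models.Sequential()
    model.add(layers.Input(shape=(*image_size, 3)))
    for n in filters:  # hypothetical filter counts, one per convolutional block
        model.add(layers.Conv2D(n, 3, activation="relu"))
        model.add(layers.MaxPooling2D())
    model.add(layers.Flatten())
    model.add(layers.Dense(256, activation="relu"))
    # One output unit per celebrity; softmax turns the scores into class probabilities.
    model.add(layers.Dense(num_classes, activation="softmax"))

    model.compile(
        optimizer="adam",
        loss="sparse_categorical_crossentropy",
        metrics=["accuracy"],
    )
    return model
```

The helper `spatial_size_after` is a quick sanity check that the input is large enough for six pooling stages: with a 256-pixel side, a 2×2 feature map remains before flattening.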

The model achieves 98.44% accuracy with a loss of 0.06.

    git clone https://github.com/your-username/your-repository.git
    cd your-repository
    pip install -r requirements.txt
    python main.py